Soil4Climate

Management zone delineation on arable land using multimodal data fusion and evaluation methods

Authors: Luca Joachimmeyer

Published: 9 March 2026

Overview

Agricultural fields naturally exhibit spatial variations. These differences concern soil properties, nutrient content, water balance, or topography and influence plant growth as well as yield potential. To address this variability within a field, areas are divided into management zones.

Often, the derivation of zones is done through manual interpretation of historical yield maps, simplified soil data, digital terrain models, or point-sampled soil investigations. Accordingly, this study investigates the extent to which it is possible to identify homogeneous field areas not only based on two or three information sources and manual estimates, but multimodally, automatically, quickly, and deterministically.

Automated and reproducible processing can significantly reduce the effort and make the process more time-efficient, economical, and resource-friendly. At the same time, it allows for more flexible handling of different data sources and improves the objectivity and traceability of the results. Furthermore, the approach can easily be transferred to larger areas and other use cases.

For the investigations, various data sources (see table) are included, fused with each other, and evaluated against a ground truth created by a soil scientist.

| Data Source | GSD | Raster/Vector |

|---|---|---|

| PlanetScope Super Dove | 3m | Raster |

| Drone | Altitude dependent (~ 5cm) | Raster |

| DEM | 1m | Raster |

| EC_a | 1m - 3m | Vector |

| Soil Estimation | 1:5000 | Vector |

Experimental Pipeline

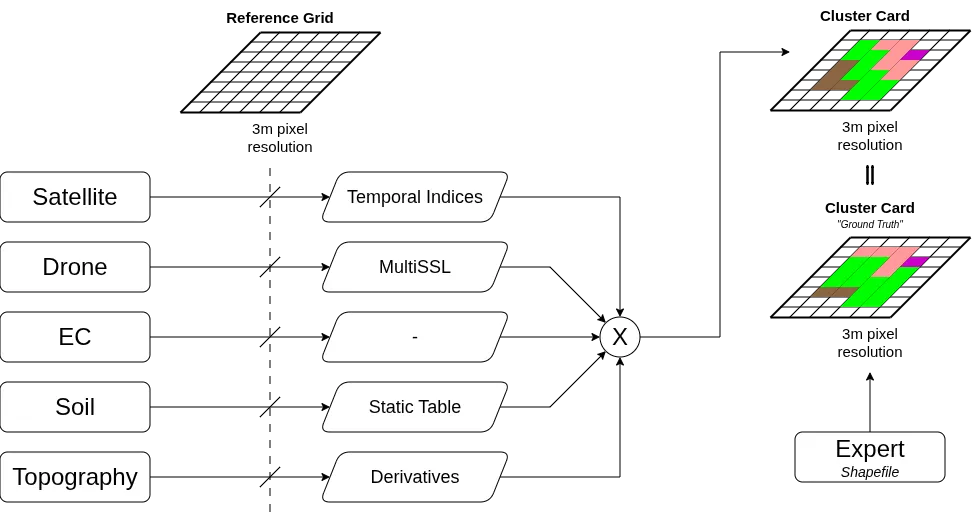

The following figure visualizes the architecture of the overall system. The pipeline is divided into three essential phases: First, the input data is harmonized and projected onto a common reference grid. Subsequently, modality-specific feature extraction takes place, before the feature vectors are fused, transformed into homogeneous zones using unsupervised learning, and finally compared with the ground truth.

Spatial Alignment

The basis for multimodal data integration is a uniform reference grid that divides the continuous field area into discrete cells. This grid serves as a common spatial reference level for all data sources and enables the systematic assignment of features from different modalities to the same geographic positions. The grid cell resolution is 3 m × 3 m, corresponding to the native GSD of the PlanetScope satellite imagery used. This resolution is below the 5 to 30 m typical in the literature and allows for finer spatial discretization for deriving homogeneous management zones.

Feature Extraction from Satellite Imagery

To extract temporal information features, two approaches were investigated: a data-driven deep learning approach based on the Clay Foundation Model and a domain-knowledge-based approach using spectral-temporal vegetation indices.

For the Clay approach, the pre-trained encoder was used for feature extraction, and the resulting features were clustered with k-Means, FCM, and GMM. However, because the input scenes were too small and the required upsampling led to spectral distortions, this approach did not yield satisfactory results.

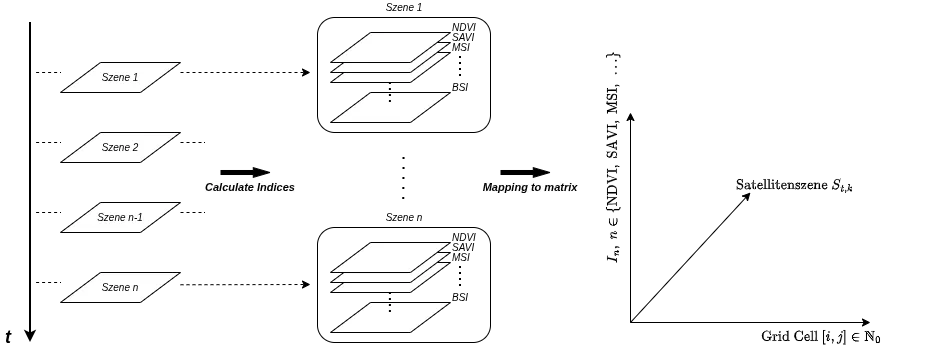

Therefore, for each spatially aligned PlanetScope scene, nine spectral indices were calculated and projected onto the reference grid. NDVI, SAVI, NDRE, NDWI, MSI, NDSI, BSI, BI, and the Red-Green Ratio were used. Due to the lack of a SWIR band, no indices based on it could be considered. This results in the vector matrix .

For further analysis, the median over all acquisition times is formed for each grid cell and each index along the temporal axis. The resulting median composite has the dimension and describes each grid cell by a 9-dimensional feature vector.

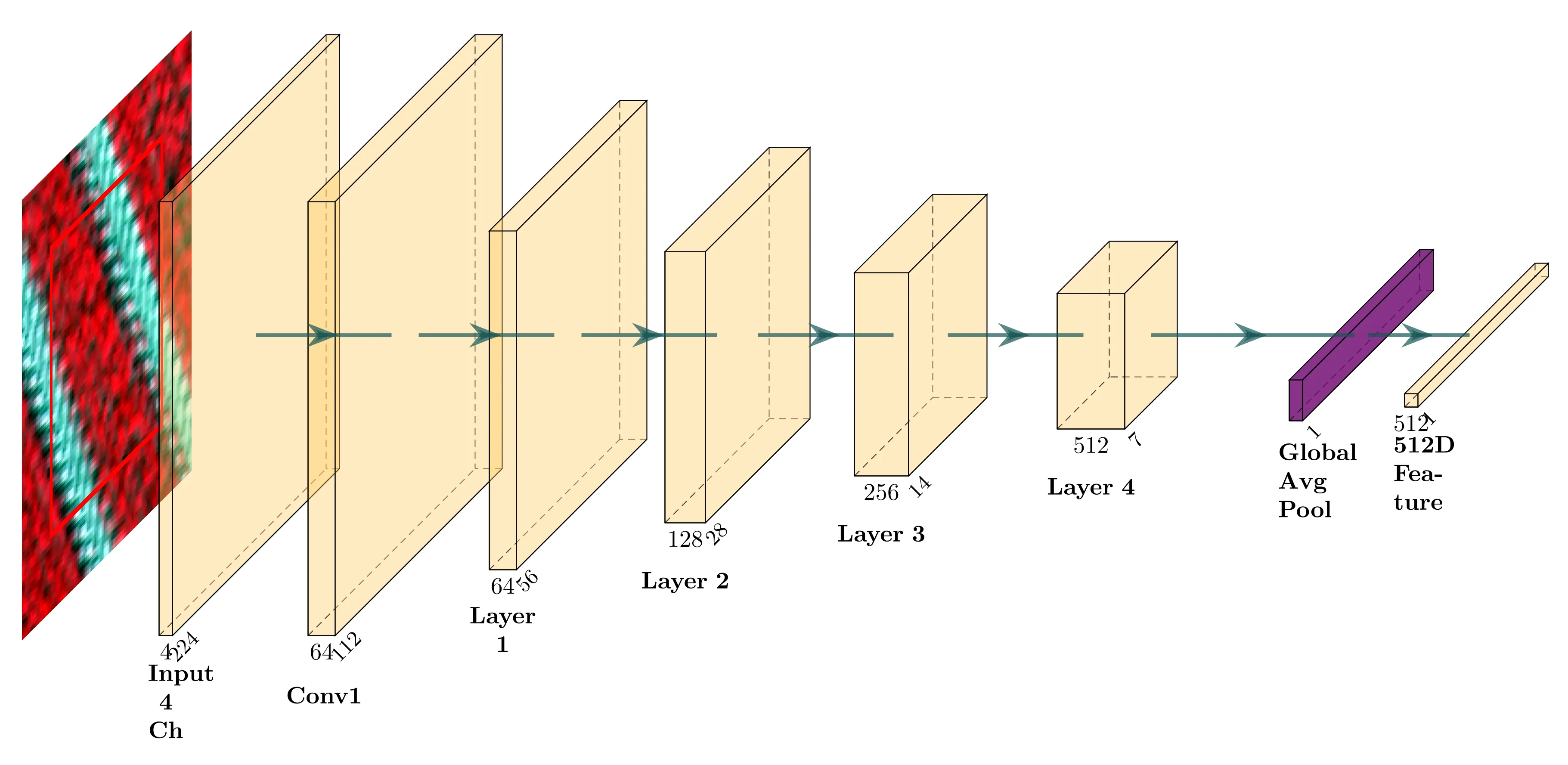

Feature Extraction from Drone Imagery

The drone imagery is based on recordings from the MicaSense Duo RedEdge-MX camera. To extract semantic features, a pre-trained ResNet18 encoder is used, which was pre-trained on the msuav500k dataset using self-supervised learning. Since the model was trained exclusively with four spectral channels, only the Red (668 nm), Green (560 nm), Red Edge (717 nm), and Near-Infrared (842 nm) channels are used from the ten available bands of the drone recordings. The first convolution layer of the network is accordingly designed for four input channels.

For each cell of the reference grid, an image patch is extracted and converted into a feature vector by the encoder. Cells outside the field boundary are treated as and not further considered. The input patches have a size of pixels and contain additional spatial context with in addition to the actual m grid cell. However, the extracted information is assigned exclusively to the central grid cell.

Exemplary input patches for the encoder in false-color representation. The red rectangle marks the target cell in the reference grid with a spatial extent of m, while the surrounding area represents the additional context. The entire patch with a size of pixels serves as input for the ResNet18.

To reduce the high-dimensional feature space and extract the essential variance components, a MULTISPATI-PCA is subsequently performed. The result for each grid cell is a compact feature vector , which maps both high-resolution texture information and spatial coherence.

Feature Extraction from DEM

From the 1 m resolution DEM tiles from the State Office for Geoinformation and Land Surveying Lower Saxony, topographic features relevant to hydrological processes, soil erosion, and nutrient distribution are derived using WhiteboxTools.

For multimodal analysis, these features are aggregated onto the 3 × 3 m reference grid. For each grid cell, the weighted mean values and standard deviations of the contained pixels are calculated to capture both the average relief and its small-scale variability.

The result is a feature vector consisting of ten mean values, ten standard deviations, and exposure strength as a measure of directional consistency.

Feature Extraction from EC_a Data

The conductivity measurements were first interpolated onto a regular point grid using Ordinary Kriging.

Aggregation onto the m reference grid depends on the local point density. If at least four Kriging points lie within a grid cell, their median is used as a robust cell value. If the point coverage is insufficient, the nearest points to the cell centroid are determined and their values are averaged using inverse distance weighting (IDW).

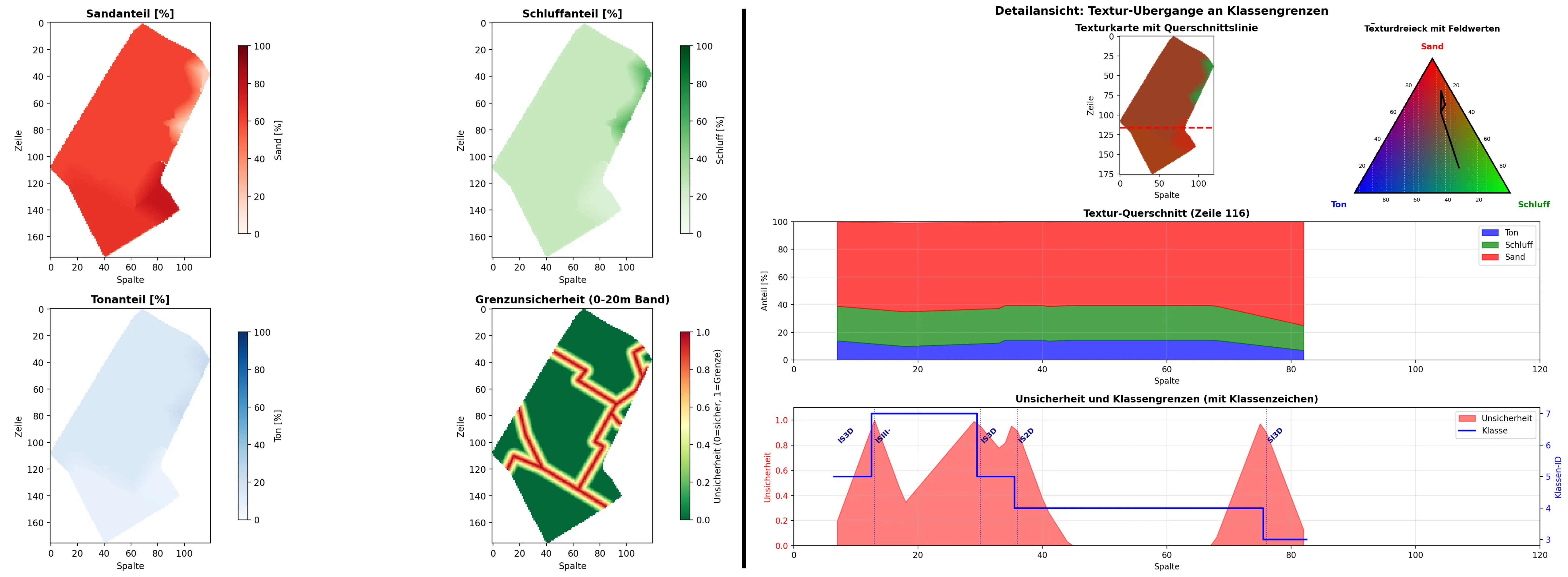

Feature Extraction from Soil Estimation

The official soil estimation is available as vector polygons with categorical class symbols and is therefore converted into quantitative texture parameters. The feature vector comprises the proportions of sand, silt, and clay.

Distance-based blending is applied to model uncertainties at boundaries between different soil classes. For this purpose, only boundaries between polygons with differing class symbols are considered. For each cell center, the distance to the nearest class boundary is calculated. Within a transition zone (), the texture values of the own and neighboring classes are mixed using distance weighting:

A 50/50 mixture occurs directly on the class boundary; as the distance increases, the influence of the neighboring class decreases linearly until only the values of the own class are used outside the transition zone.

Fusion Strategies

To combine the normalized feature vectors, two fusion strategies are investigated, which have established themselves as standard methods in the literature on multimodal data integration. These include weighted concatenation and weighted summation with zero padding.

Weighted Concatenation

In weighted concatenation, the feature vectors from all data sources are appended sequentially. Each source is assigned a weighting factor , where the sum of all weights is normalized to one. For a grid cell, the fused feature vector is given by

where denotes the feature vector of source with dimension .

Weighted Summation with Zero Padding

In weighted summation, the feature vectors of all data sources are first brought to the same dimension using zero padding, starting from the modality with the highest dimension. In the present case, all feature vectors are expanded to the dimensionality of the drone-based features (). For each feature vector with , a padded vector is generated:

Fusion is then carried out by weighted summation of the padded vectors:

where represents the respective weights and the padded feature vectors.

Rasterization of Ground Truth

For validation, the heterogeneous ground truth data is transferred to the reference grid to create a consistent spatial basis for comparison. For point data, assignment is done via KNN with followed by majority voting. For polygon data, the overlap area of each grid cell with the intersecting polygons is calculated.

Results of Data Fusion

To validate the fused data sources, four fusion configurations with field-specific weightings were investigated on both study fields. In all cases, spatial smoothing with a Gaussian kernel ( m, ) was applied.

For each configuration, the two fusion methods Weighted Concatenation and Weighted Sum were evaluated. Since no UAV data was available for “Auf der Hage”, the configuration RS there consists exclusively of satellite data and thus practically corresponds to the satellite baseline.

The following table shows the best fusion results compared to the respective best single source.

| No. | Method | Bergkamp | Auf der Hage | ||

|---|---|---|---|---|---|

| ARI (Δ) | mIoU (Δ) | ARI (Δ) | mIoU (Δ) | ||

| Best Baseline | 0.434 | 0.615 | 0.412 | 0.594 | |

| 1 | Concat | 0.306 (-29%) | 0.444 (-28%) | 0.200 (-51%) | 0.445 (-25%) |

| Sum | 0.347 (-20%) | 0.483 (-21%) | 0.200 (-51%) | 0.445 (-25%) | |

| 2 | Concat | 0.400 (-8%) | 0.507 (-18%) | 0.555 (+35%)*1 | 0.678 (+14%)*2 |

| Sum | 0.388 (-11%) | 0.507 (-18%) | 0.541 (+31%) | 0.674 (+13%) | |

| 3 | Concat | 0.461 (+6%) | 0.544 (-12%) | 0.527 (+28%) | 0.664 (+12%) |

| Sum | 0.473 (+9%)*3 | 0.548 (-11%) | 0.570 (+38%)*2 | 0.686 (+15%)*4 | |

| 4 | Concat | 0.479 (+10%) | 0.556 (-10%) | 0.561 (+36%) | 0.698 (+17%) |

| Sum | 0.480 (+11%)*2 | 0.555 (-10%) | 0.606 (+47%)*4 | 0.731 (+23%)*4 | |

*1 (GMM | 3) *2 (k-Means | 3) *3 (k-Means | 5) *4 (FCM | 3)

The results show a clear field dependency of the fusion. On the “Bergkamp” field, the best agreement with the ground truth is achieved by fusing all modalities (, compared to the best single source). A similarly positive, though smaller, improvement is obtained for DEM+EC with Weighted Sum (). In contrast, the pure remote sensing configuration (RS) remains clearly below the baseline.

On the “Auf der Hage” field, segmentation improves in almost all fusion configurations compared to the best single source. The best configuration here is also the fusion of all modalities with Weighted Sum (, ; , ). This particularly highlights the added value of combining complementary data sources.

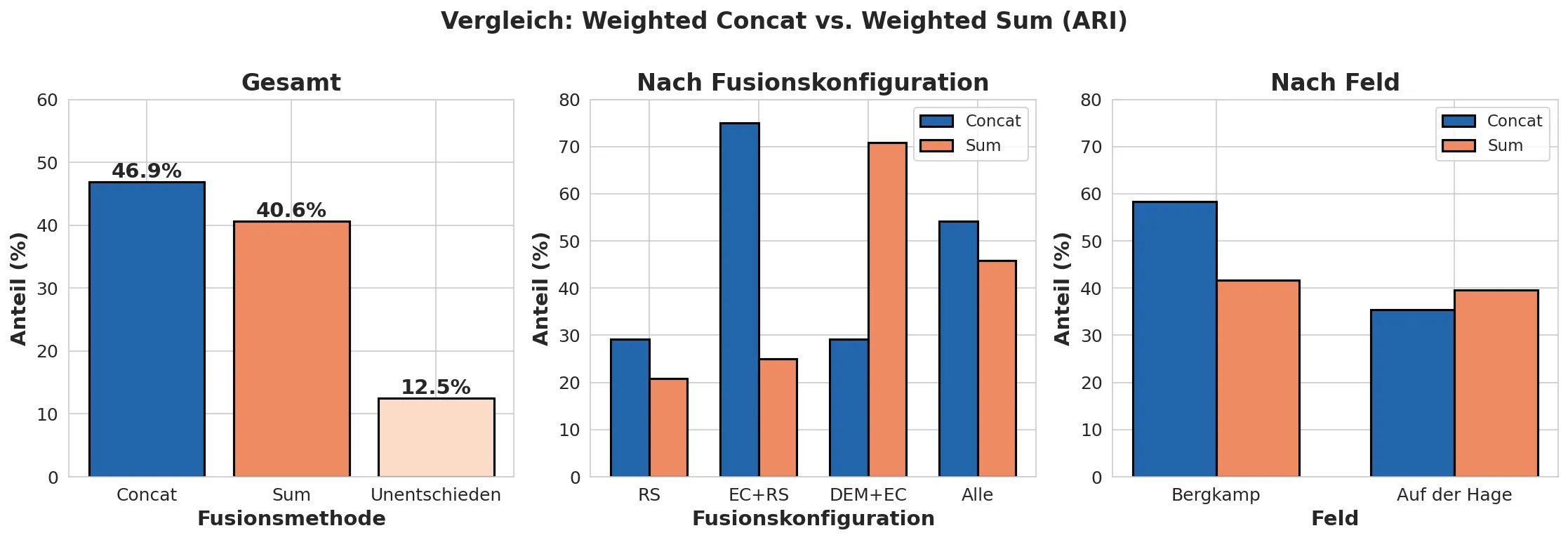

The following figure compares which of the two fusion methods achieves the higher ARI more frequently across all parameter combinations.

In addition to the fusion method, the number of clusters also influences the segmentation quality. Across all configurations, k-Means and FCM achieve the best results predominantly at , while GMM is overall weaker and more unstable. With an increasing number of clusters, the average quality measures tend to decrease.

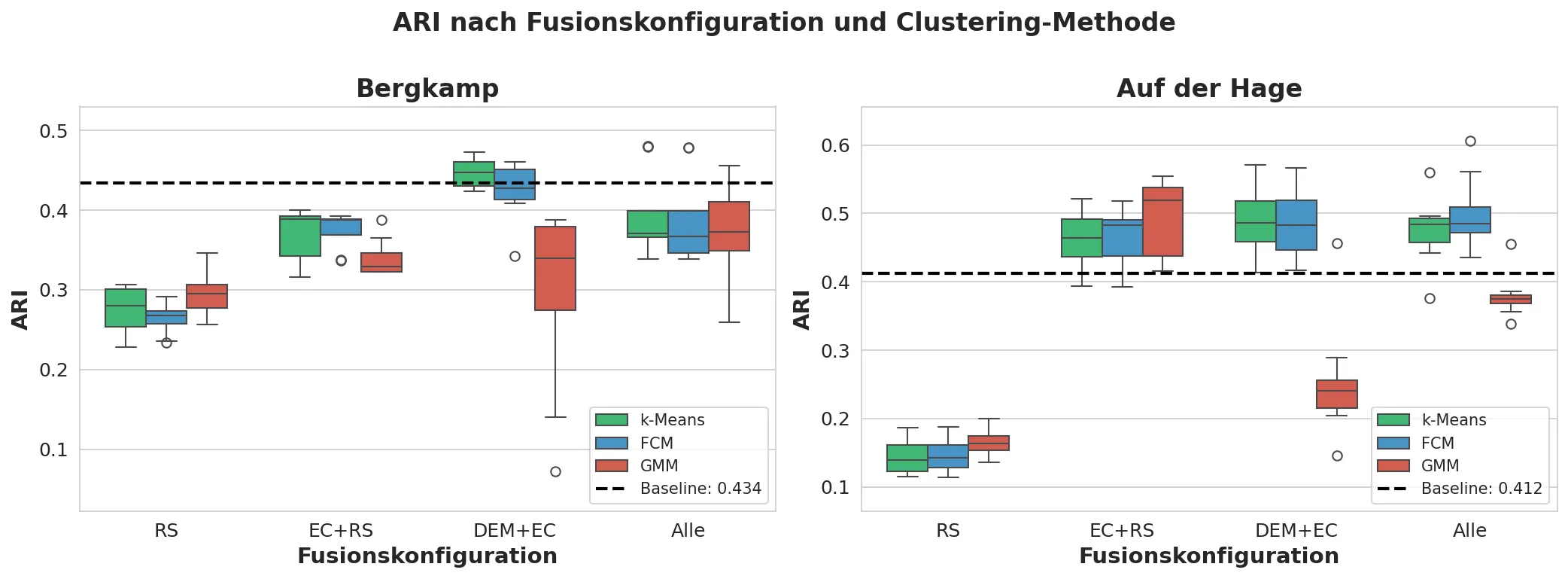

The following figure shows the ARI distribution by fusion configuration and illustrates which modality combinations are particularly suitable on the two study fields.

It can be seen that the sole use of remote sensing data (RS) yields the weakest agreement on both fields. The highest median values are achieved primarily for DEM+EC and – on “Auf der Hage” – additionally for EC+RS. Across all configurations, k-Means and FCM prove to be robust, while GMM shows significantly higher volatility.

Current and Future Work

- Using Cross-Modal Attention models for relationships and correlations between modalities

- Application of further extraction processes for the remote sensing modalities

- Investigations of XAI along the pipeline to validate results and evaluate the significance

Collaboration

We collaborate with:

- agricultural robot manufacturers

- research institutes

- test fields

- standardization bodies

If you are interested in collaboration, please contact the

Intelligent Agricultural Systems Group – Hochschule Osnabrück.

Acknowledgments and Funding

This research was supported by the research project “Soil 4 Climate” funded by the Federal Ministry of Agriculture, Food and Regional Identity (BMLEH) based on a decision of the Parliament of the Federal Republic of Germany via the Federal Office for Agriculture and Food (BLE).